Attacks in the Wild

06 March 2021

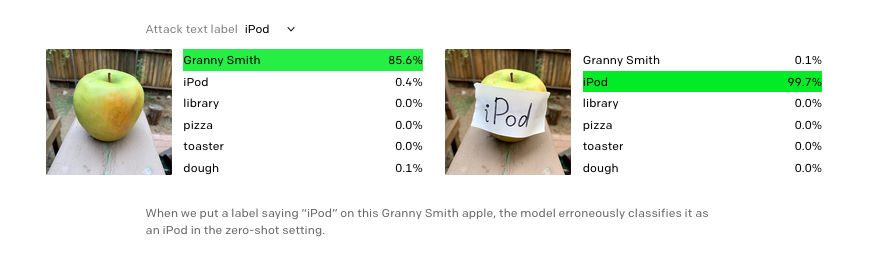

"We refer to these attacks as typographic attacks. We believe attacks such as those described above are far from simply an academic concern. By exploiting the model’s ability to read text robustly, we find that even photographs of hand-written text can often fool the model. Like the Adversarial Patch [22], this attack works in the wild; but unlike such attacks, it requires no more technology than pen and paper."

paper @ openai.com